Scientists Find Way of Using WiFi to Monitor People Through Walls

Researchers working out of Carnegie Mellon University (CMU) in Pennsylvania (USA) have demonstrated a new way of using Machine Learning / AI and a deep neural network to map the position of human bodies, including through walls, by analysing the phase and amplitude of WiFi (wireless network) signals.

In recent years a fair bit of work has been done in the field of 2D and 3D “human pose estimation” – a way of identifying and classifying the joints in the human body – via RGB cameras, LiDAR, and radars (i.e. using sensors to try and identify the body position or movement of a person). Such things could be useful in fields like video gaming, healthcare, AR, and sports etc.

The issue is that doing this task with images (e.g. cameras) can be problematic as they’re often adversely affected by occlusion and lighting, while Radar and LiDAR technologies are highly specialised, expensive and require a lot of power. Instead, the CMU team decided to explore the use of regular WiFi antennas, and predictive deep learning architectures, for detecting body position.

“We developed a deep neural network that maps the phase and amplitude of WiFi signals to UV coordinates within 24 human regions. The results of the study reveal that our model can estimate the dense pose of multiple subjects, with comparable performance to image-based approaches, by utilizing WiFi signals as the only input. This paves the way for low-cost, broadly accessible, and privacy-preserving algorithms for human sensing,” said the DensePose from WiFi paper, which has yet to be peer-reviewed.

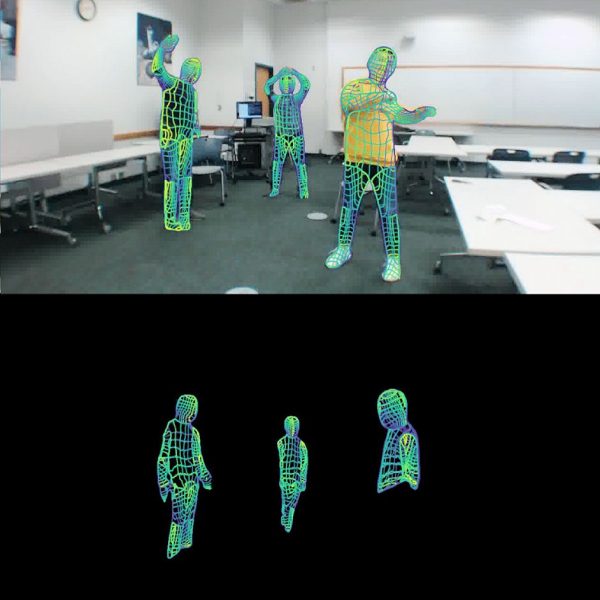

The idea of using WiFi signals to monitor an environment (like a room), and people within that area, is not new, although the data is often noisy and it can be difficult to visualise what somebody is actually doing. CMU’s approach goes a step further by using DensePose and machine learning technology to estimate what its targets are doing and then clearly visualising that (see picture).

The model they developed only requires two wireless routers, each with 3 antennas, working on the regular 2.4GHz band. But you’d need to place these on opposite sides of your target and have full control over both units in order to gather the data. The weakness of WiFi signals also limits the range, and accuracy is still an issue.
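To make the “phase and amplitude” input a little more concrete: WiFi hardware can report channel state information (CSI), which is one complex number per antenna link and subcarrier. The sketch below is only an illustration, not the CMU team’s code. It uses synthetic data, and the subcarrier count, the function names and the made-up channel are all assumptions. It splits each complex sample into amplitude and phase for the 3 x 3 = 9 antenna links, then removes the 2π jumps from the phase.

```python
import cmath
import math

# Assumed sizes: 3 antennas per router (from the article); the number of
# subcarriers is a hypothetical value chosen for this sketch.
TX, RX, SUBCARRIERS = 3, 3, 30


def amplitude_and_phase(csi):
    """Split complex CSI samples into amplitudes and phases (radians)."""
    return [abs(h) for h in csi], [cmath.phase(h) for h in csi]


def unwrap(phase):
    """Remove 2*pi jumps between neighbouring subcarriers."""
    if not phase:
        return []
    out = [phase[0]]
    for p in phase[1:]:
        delta = p - out[-1]
        # Fold the step into [-pi, pi) so consecutive values stay close.
        delta = (delta + math.pi) % (2 * math.pi) - math.pi
        out.append(out[-1] + delta)
    return out


def synthetic_link(tx, rx):
    """Made-up channel: fixed gain per link, phase falling 0.5 rad per subcarrier."""
    gain = 1.0 + 0.1 * (tx + rx)
    return [cmath.rect(gain, -0.5 * k) for k in range(SUBCARRIERS)]


# One (amplitude, unwrapped phase) pair for each of the 9 antenna links.
features = {}
for tx in range(TX):
    for rx in range(RX):
        amp, raw_phase = amplitude_and_phase(synthetic_link(tx, rx))
        features[(tx, rx)] = (amp, unwrap(raw_phase))
```

In a real setup the complex samples would come from the routers rather than from `synthetic_link`, and a neural network would take the resulting amplitude and phase values as its input.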

Summary of Study Results

We demonstrated that it is possible to obtain dense human body poses from WiFi signals by utilizing deep learning architectures commonly used in computer vision. Instead of directly training a randomly initialized WiFi-based model, we explored rich supervision information to improve both the performance and training efficiency, such as utilizing the CSI phase, adding keypoint detection branch, and transfer learning from an image-based model.

The performance of our work is still limited by the public training data in the field of WiFi-based perception, especially under different layouts. In future work, we also plan to collect multi-layout data and extend our work to predict 3D human body shapes from WiFi signals.

We believe that the advanced capability of dense perception could empower the WiFi device as a privacy-friendly, illumination-invariant, and cheap human sensor compared to RGB cameras and Lidars.

As it stands, the system still has trouble correctly representing rarely occurring body poses, and it struggles with situations where there are three or more concurrent people in one capture. But being able to harness a bigger data set for training will most likely iron a lot of this out by helping the learning model to correctly interpret the data it’s getting.

Mark is a professional technology writer, IT consultant and computer engineer from Dorset (England). He founded ISPreview in 1999 and enjoys analysing the latest telecoms and broadband developments.

Will not be abused in any way for tyrannical state surveillance *cough*

Bit unsubtle using the word targets…

Subjects then.. 🙂

“Such things could be useful in fields like video gaming, healthcare, AR, and sports etc.”

…anti-terror operations, freeing hostages…